M2M Insider: M2M and IoT News, Trends and Analysis

- Written by Bill Gerba

- Published: 23 July 2015

The Internet of Things has a privacy problem. More specifically, a lack-of-privacy problem. It goes something like this:

1) Nothing on the Internet is private

2) IoT devices are, by their very definition, connected to the Internet

Q.E.D. Devices on the IoT are not private.

There are certainly plenty of ways to make connected devices more private. Multi-layered security, consumer education programs and of course omitting personal data collection and utilization from IoT devices can all go a long way toward making such systems less prone to leak private data, but the long and short of the story is this: IoT devices aren't ever going to be 100% private and secure. Now we, as consumers and users of these systems, just have to decide what our collective tolerance for lack-of-privacy is. Or, more likely, what we're going to demand in exchange for giving up private data on purpose. Keith Winstein over at Politico has dubbed this the "right to eavesdrop on your things," and there's a good reason to worry: if history is any indicator, the collective "we" will be willing to give up plenty of rights for very little in return, and the not-so-collective I, and others like me, will not be able to do much about it.

But that's not to say that people aren't trying to get ready for it. Stanford has a secure IoT initiative that specifically references privacy plans, and plenty of industry groups are trying to stake out some position in the privacy arena, if for no other reason than they're terrified of government intervention. But if these efforts turn out to be anything like the successive attempts to establish a privacy code of conduct in the digital signage industry, a bunch of words will be generated by well-meaning industry folk, they will be completely ignored by those implementing the systems (and those who stand to profit from them), and everyone will cross their fingers and hope for the best.

- Written by Bill Gerba

- Published: 21 July 2015

Remember a few weeks ago when I was (sort-of-tongue-in-cheek) writing about IoT security being so bad that hackers could take control of any "smart" device out there, including cars? Well, as it turns out, in some cases they already can.

As WIRED tells us, some white-hat hackers have figured out how to compromise the M2M system used by hundreds of thousands of Chrysler cars on the road today, giving them the power to mess with climate control settings, change radio presets, and even turn the vehicle off while it's barreling down a highway at 70 miles per hour.

While GM and others have had systems like OnStar in place for well over a decade now, those systems frequently combined proprietary software with (relatively) exotic hardware and network infrastructure, making it harder (or at least less worthwhile) for hackers to infiltrate. Since Chrysler's approach uses the plain ol' Internet as a carrier medium, the target is both juicier and more accessible to hacker groups, whether they're wearing a white, black or other-colored hat.

It's hard to imagine that a big company with so much to lose would pick anything other than de rigueur security standards like client certificate authentication and two-way encryption of all data with some big cipher, but since the hackers have so far been mum about the exact nature of the hack, we can only speculate that there is some protocol violation or roll-your-own security vulnerability that they're exploiting.
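For readers who haven't run into client certificate authentication before, here is a minimal sketch of what it looks like on the server side, using Python's standard `ssl` module. To be clear, this says nothing about how Chrysler's system actually works; the function name and the file-path parameters are made up for illustration, and a real deployment would also need certificate issuance, revocation and key storage sorted out.

```python
import ssl
from typing import Optional


def make_mutual_tls_server_context(
    cert_file: Optional[str] = None,       # hypothetical path to the server certificate (PEM)
    key_file: Optional[str] = None,        # hypothetical path to the server private key (PEM)
    client_ca_file: Optional[str] = None,  # hypothetical path to the CA that signs device certificates
) -> ssl.SSLContext:
    """Build a server-side TLS context that refuses clients without a valid certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)

    # Refuse legacy protocol versions.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2

    # This is the "client certificate authentication" part: the handshake
    # fails unless the connecting device presents a certificate that chains
    # to a CA we trust.
    ctx.verify_mode = ssl.CERT_REQUIRED

    # The paths are optional only so the sketch can be built without real
    # files; a working server needs both loaded before it can accept anyone.
    if cert_file is not None:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if client_ca_file is not None:
        ctx.load_verify_locations(cafile=client_ca_file)

    return ctx
```

A server would pass the resulting context to `ctx.wrap_socket(sock, server_side=True)`. The point is simply that with `CERT_REQUIRED` set, an unauthenticated stranger on the network never gets past the handshake, which is why a hole is more likely to sit in something layered on top of or alongside the standard machinery.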

My guess is that the hackers were able to gain entrance due to some glitch brought about because Chrysler uses the same cellular communications channel for both their M2M system and their infotainment unit. The company has obviously been tight-lipped so far, though it does not appear to be particularly worried about the vulnerability (which will change after the first fatality, no doubt). Congress is due to start debating some new regulations for car IoT security in the coming weeks.

UPDATE: The company decided this might actually be a big problem after all, and has issued a recall notice for 1.4 million affected vehicles. This makes Wink's gaffe look like small potatoes in comparison.

- Written by Bill Gerba

- Published: 20 July 2015

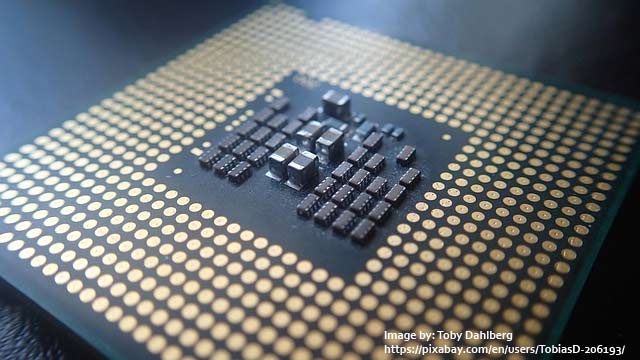

For years -- nay, decades -- now, Intel has worked tirelessly to keep up with Moore's Law, which states that the number of transistors on a CPU die (and thus, roughly, its performance) doubles about every two years, a figure often quoted as 18 months. First noted by Intel co-founder Gordon Moore, the observation has proved remarkably accurate for 50 years, and forecasted the remarkable growth in computing power that launched the PC revolution first and the Internet revolution later, and is now responsible for the gigaflops and gigaflops of computation power sitting inside the smartphone in your pocket. However, in recent years it has become more and more difficult for Intel and others to keep up with the "Law." With each process shrink, the zillions of tiny transistors get smaller, the current they leak becomes larger, and the cost of the equipment becomes greater.
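To get a feel for how quickly that kind of doubling compounds, here is a small back-of-the-envelope sketch. The function name and the sample numbers are purely illustrative, and the doubling period is a parameter because the figure gets quoted as anything from 18 months to two years depending on who you ask.

```python
def projected_transistors(
    initial_count: float,
    years: float,
    doubling_period_years: float = 1.5,  # 18 months; pass 2.0 for the two-year version
) -> float:
    """Project a transistor count forward, assuming steady exponential doubling."""
    return initial_count * 2 ** (years / doubling_period_years)


# Two doublings in three years at an 18-month cadence: 4x the starting count.
three_year_growth = projected_transistors(1.0, years=3)

# The same decade looks very different depending on which cadence you assume.
decade_at_18_months = projected_transistors(1.0, years=10, doubling_period_years=1.5)
decade_at_24_months = projected_transistors(1.0, years=10, doubling_period_years=2.0)
```

Over a decade, the 18-month cadence works out to roughly a 100x increase while the two-year cadence gives 32x, which goes some way toward explaining why slipping even a little on the schedule is such a big deal.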

That's why in the last half-decade or so Intel has adopted a "tick-tock" model where instead of switching to a new fabrication process with each new generation (and thus absorbing the enormous capex and complex technical challenge of doing so), they're doing that only every other time, and spending the other cycle making more mundane changes under the hood of their last-gen CPU. For the most part, this has worked well for Intel. They get a new set of products to sell, their marketing folks stay employed thinking of reasons to compel consumers to upgrade, and the market gets last year's chips for cheap, since inventories must be cleared.

There's another beneficiary in this scenario, though: non-Intel CPU manufacturers. And these days, they mostly make ARM chips. While flagship CPUs from big vendors like Samsung benefit from huge R&D investments and process advancements, most manufacturers and most generations of CPUs are still several process nodes behind where Intel is today. So while ARM cores might be smaller and more efficient than Intel cores (or they might not be, it's very hard to get a solid answer on that subject), it's undeniable that they'd be more efficient if built on a more recent process.

While semiconductor fabrication technology doesn't quite follow the same "race to the bottom" curve as other tech manufacturing equipment, process improvements do trickle down a bit, and previously high-end manufacturing improvements are starting to show up in even midrange ARM chips now. To us, the OEMs, ODMs and end-users, that takes the form of better equipment at lower prices, even at low volume tiers. IoT hubs that struggled to manage a few devices are suddenly more capable, and SoCs that could barely throw a few graphics on a screen while managing a device are starting to handle more complex tasks in the same power (and heat) envelope.

Subscribe to the M2M Insider RSS feed
